
11/16/2023 0 Comments Online cpu benchmark comparison Chaos Group V-Ray CPU Benchmark (1.0.8).Unit of measurement: Render time in minutes (lower is better). GNU Compiler Collection (GCC) version 7.4.0, compiling 8.2.0 on Windows 10.

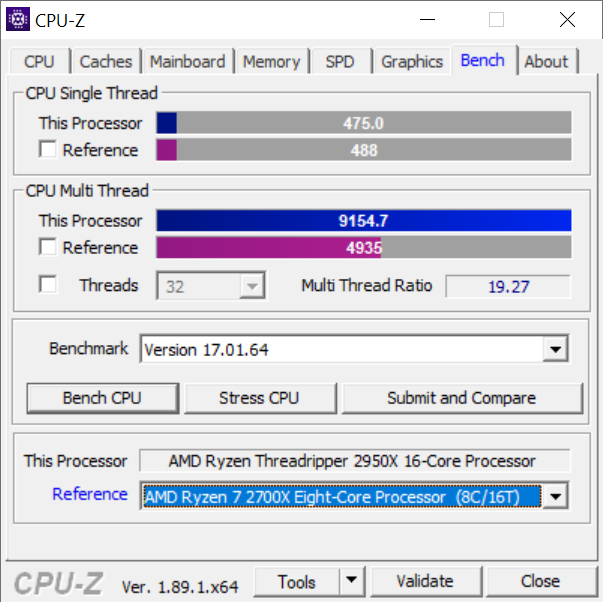

Blender 2.79 GN Monkey Heads render (CPU-targeted workload with mixed assets, transparencies, and effects).Unit of measurement: Render time in minutes (lower is better) Blender 2.79 GN Logo render (frame from GN intro animation, heavy on ray-tracing).Thread count equals the CPU thread count. 7-ZIP dictionary size is 2^22, 2^23, 2^24, and 2^25 bytes, 4 passes and then averaged.Unit of measurement: MIPS (millions of instructions per second higher is better) 7-ZIP Decompression benchmark (version 1806 圆4).7-ZIP Compression benchmark (version 1806 圆4).Our workstation benchmarks include the following tests: We are also detailing more explicitly the unit of measurement in text, although our charts typically do this as well. We are beginning to spend more effort publicly documenting the exact versions of our tests, hoping that this is helpful to those reading our tests. Our CPU testing methodology is split into two types of benchmarks: Games and workstation workloads, but every CPU which is sufficiently high-end will go through both sets of tests. This is a 'pilot episode' of our new workstation testing! CPU Test Methodology We try to keep them running relatively non-stop and around the clock when we’re working on CPU content. A full run on the test suite, including games (not featured today), takes approximately 8 hours per CPU, plus another ~8 hours for the overclocked variant. Most of these CPUs were also overclocked for a second pass through the entire test suite. Here’s the list of initial CPUs we tested, with more to be added as we go: For this reason, we have an eclectic mix of CPUs, but did put most of our emphasis on testing the R7 2700(X), i9-9900K, and R5/i7 CPUs, as we know these are the most interesting to our audience. Our goal for this testing was to get a relatively wide sweep of CPUs tested so that we could find any potential shortcomings of the testing approach. 
This test suite has gone through a few months of validation, so it’s time to try it out in the real world. It takes us about 6 months to a year to change our testing methodology, as we try to stick with a very trustworthy set of tests before introducing potential new variables. We understand that many of you have other requests still awaiting fulfillment, and want you to know that, as long as you tweet them at us or post them on YouTube, there is a good chance we see them.

We’ve had a lot of requests to add some specific testing to our CPU suite, like program compile testing, and today marks our delivery of those requests. As new CPUs launch, we’ll continue adding their most immediate competitors (and the new CPUs themselves) to our list of tested devices. We don’t yet have a “full” list of CPUs, naturally, as this is a pilot of our new testing procedures for workstation benchmarks. We’re starting with a small list of popular CPUs and will add as we go. Today is the unveiling of half of our new testing methodology, with the games getting unveiled separately. These are new for us, but we’ve added program compile workloads, Adobe Premiere, Photoshop, compression and decompression, V-Ray, and more. New testing includes more games than before, tested at two resolutions, alongside workstation benchmarks. This is an exciting milestone for us: We’ve completely overhauled our CPU testing methodology for 2019, something we first detailed in our GamersNexus 2019 Roadmap video.
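The 7-ZIP methodology describes results taken at four dictionary sizes (2^22 through 2^25 bytes), with 4 passes that are then averaged. As a rough illustration of that arithmetic, here is a minimal Python sketch. The function name `average_mips`, the input layout, and the sample numbers are all hypothetical; the article does not say whether passes are averaged per dictionary size first or all at once, so the sketch assumes per-size averaging (with an equal number of passes per size, both approaches give the same result).

```python
from statistics import mean

# Dictionary sizes named in the methodology: 2^22 through 2^25 bytes.
DICTIONARY_SIZES = [2**22, 2**23, 2**24, 2**25]
PASSES = 4  # passes stated in the methodology


def average_mips(results):
    """Average MIPS readings across passes and dictionary sizes.

    `results` maps a dictionary size in bytes to a list of per-pass MIPS
    readings. This layout is an assumption made for illustration only.
    """
    for size in DICTIONARY_SIZES:
        if len(results.get(size, [])) != PASSES:
            raise ValueError(f"expected {PASSES} passes for dictionary size {size}")
    # Assumption: average each dictionary size's passes, then average those means.
    per_size_means = [mean(results[size]) for size in DICTIONARY_SIZES]
    return mean(per_size_means)


# Placeholder readings, not measured data.
placeholder = {size: [1000.0, 1010.0, 990.0, 1000.0] for size in DICTIONARY_SIZES}
print(average_mips(placeholder))
```

Because the score is in MIPS, a higher average is better, matching the unit of measurement given for both 7-ZIP tests.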
